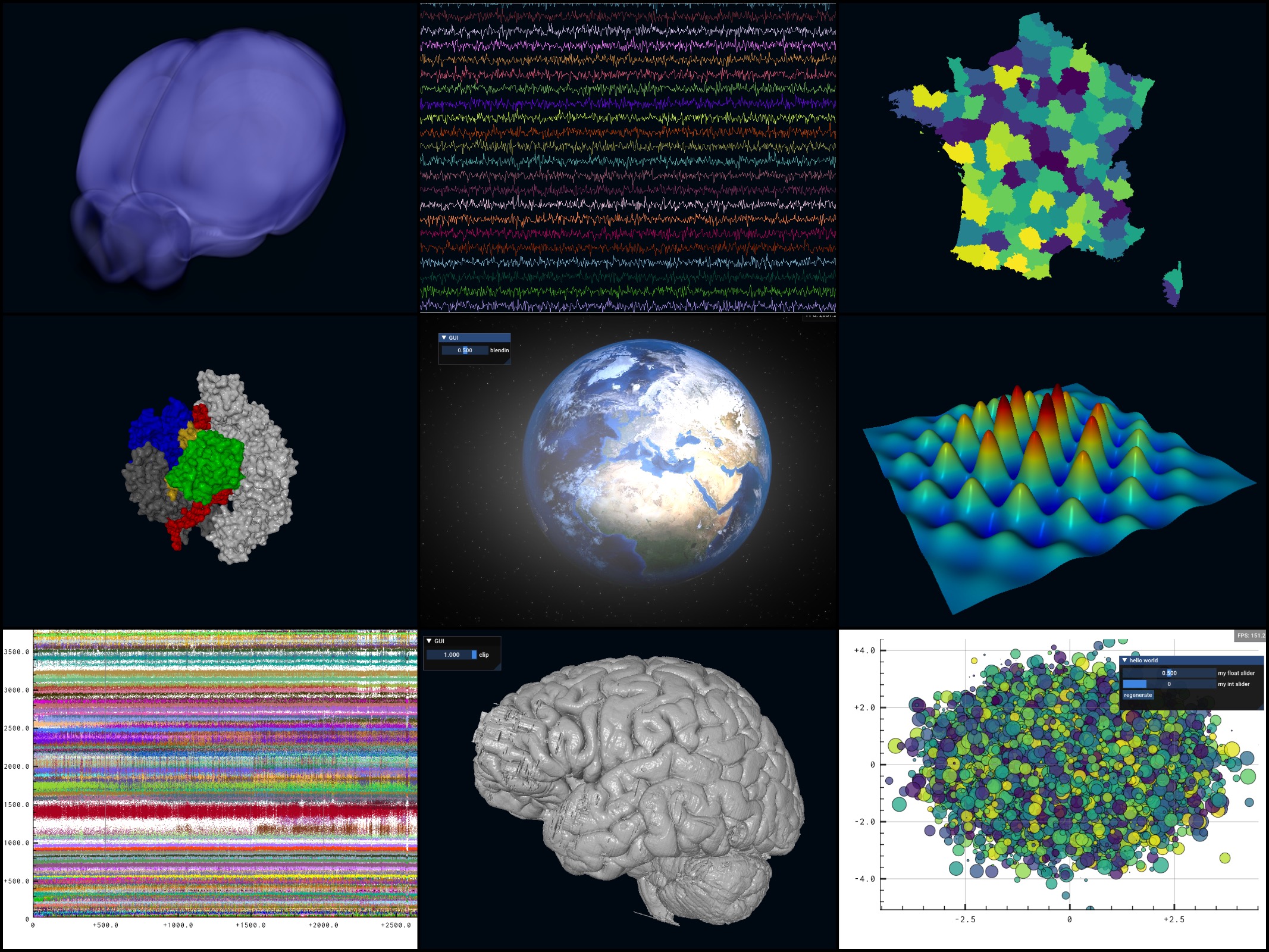

Datoviz: ultra-fast GPU scientific visualization with Vulkan

2021-02-16

I'm excited to present the project I've been working on at the International Brain Laboratory (IBL). Datoviz is an early-stage, open-source, high-performance GPU library for scientific visualization. It is built on Vulkan, the Khronos cross-platform low-level graphics API, which is 5 years old today!

Datoviz aims to provide a unified, language-agnostic platform for interactive 2D and 3D visualization, with support for GUIs and general-purpose GPU compute.